Smarter Conversations. Part 2 - Open Dialogs

This post continues the Smarter Conversations series. In part 1, I showed how to add sentiment detection to your bot. Today I would like to explore ways of keeping your dialogs open.

Waterfall

Prior to 3.5.3, the dialog routing system in the Bot Framework was not very flexible.

Imagine the following dialog:

User >> I’m looking for screws used for printer assembly

Bot >> Sure, I’m happy to help you.

Bot >> Is the base material metal or plastic?

User >> I don't know. Does it matter?

The question that the bot asks about the material will likely be handled as a waterfall:

const builder = require('botbuilder');
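A minimal sketch of such a waterfall might look like the following. The dialog id productLookup is taken from the callstack discussion later in this post; the helper name addProductLookup and the reply texts are my own assumptions for illustration. The helper receives the bot and the botbuilder module as arguments, so it can be dropped into an existing setup.

```javascript
// Hypothetical helper: registers a two-step waterfall on an existing bot.
// `bot` is a builder.UniversalBot, `builder` is require('botbuilder').
function addProductLookup(bot, builder) {
  return bot.dialog('productLookup', [
    // Step 1: ask the question. Prompts.choice pushes its own dialog onto
    // the stack, and that dialog receives the user's next message.
    (session) => {
      session.send('Sure, I\'m happy to help you.');
      builder.Prompts.choice(
        session,
        'Is the base material metal or plastic?',
        ['metal', 'plastic']
      );
    },
    // Step 2: runs only after the prompt has accepted one of the choices.
    (session, results) => {
      session.endDialog('Looking up ' + results.response.entity + ' screws.');
    }
  ]);
}
```

With a real bot this would be called once at startup, for example addProductLookup(bot, require('botbuilder')).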

The user’s response is neither metal nor plastic, so the bot would simply reprompt.

The builder.Prompts.choice call opens a new dialog that gets pushed onto the stack, and that dialog is what receives the next message. We will take a closer look in a minute.

Trigger Actions

The routing system was reworked in 3.5.3 and it came with a few important enhancements.

First, you no longer need the IntentDialog to recognize your users’ intents. The UniversalBot now inherits from Library and has its own set of global recognizers:

const bot = new builder.UniversalBot(connector);
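As a rough sketch of what a global recognizer can look like: any object with a recognize(context, done) method can be registered through bot.recognizer. The intent name question, the score of 0.9, and the trailing question mark heuristic are all assumptions made for illustration; a real bot would more likely use a language understanding service here.

```javascript
// Hypothetical recognizer: flags utterances that look like questions.
const questionRecognizer = {
  recognize(context, done) {
    const text = (context.message.text || '').trim();
    const isQuestion = /\?$/.test(text);
    // Scores range from 0.0 to 1.0; 'question' is an illustrative intent name.
    done(null, isQuestion ? { score: 0.9, intent: 'question' } : { score: 0.0 });
  }
};

// Hypothetical helper: `bot` is an existing builder.UniversalBot.
function addGlobalRecognizers(bot) {
  bot.recognizer(questionRecognizer);
}
```

Because the recognizer is registered on the bot itself, its result is available to every dialog's trigger action rather than to a single IntentDialog.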

Second, the dialogs can now define trigger actions and be picked up even while another dialog’s prompt is being processed.

bot.dialog('affirmation', [

If our bot had an intent recognizer that could understand that the user asked a question instead of answering the metal vs. plastic question, and if we had a way to handle it, we could break out of the waterfall using the triggerAction technique. In part 3 I will show you how a simple history engine can help you attach a sentiment like that to what was happening previously in the conversation and how your bot can intelligently handle such a diversion.
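Here is a small sketch of a dialog that breaks out of the waterfall this way. It assumes some global recognizer produces a hypothetical intent named question; the dialog id clarification, the reply text, and the threshold of 0.5 are likewise made up for the example.

```javascript
// Hypothetical helper: `bot` is an existing builder.UniversalBot.
function addClarificationDialog(bot) {
  return bot.dialog('clarification', (session) => {
    session.endDialog('Good question. Let me explain why I am asking.');
  }).triggerAction({
    // Fires whenever a global recognizer reports this intent,
    // even while another dialog's prompt is waiting for an answer.
    matches: 'question',
    // Ignore weak matches; the value here is an arbitrary choice.
    intentThreshold: 0.5
  });
}
```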

Routing and Callstack

Bot Framework maintains a callstack of the triggered dialog actions. When the user's utterance triggered the productLookup dialog, the stack had only one item coming into the first function of the waterfall:

*:productLookup

The builder.Prompts.choice call pushes a second item, BotBuilder:Prompts, on top of it:

*:productLookup

BotBuilder:Prompts

In the routing system that came before 3.5.3, the next message would land on BotBuilder:Prompts, fail the choice validation, and trigger a reprompt. The newer version does a much better job.

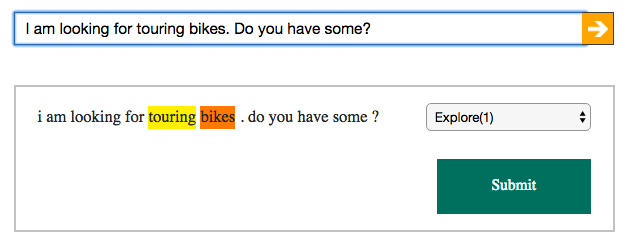

First, the UniversalBot runs the incoming message through the set of global recognizers. Then the default routing mechanism runs three parallel searches: global actions, stack actions, and active dialogs. In doing so it collects all matching route results and scores them. The best route is then selected and executed.
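To make the collect, score, and select idea concrete, here is a deliberately simplified model in plain JavaScript. It is not the framework's actual code: the route shape, the scores, and the tie-breaking rule are assumptions made up for illustration.

```javascript
// Simplified model of route selection, not the framework's implementation.
// Each search yields candidates shaped like { routeType, score, target }
// (a hypothetical shape).
function pickBestRoute(searches) {
  // Merge the candidates from all searches into one list.
  const candidates = [].concat(...searches);
  // Keep the highest score; on a tie the earlier candidate wins,
  // which is an arbitrary choice for this sketch.
  return candidates.reduce(
    (best, route) => (best === null || route.score > best.score ? route : best),
    null
  );
}

const best = pickBestRoute([
  [{ routeType: 'GlobalAction', score: 0.9, target: '*:clarification' }],
  [], // no stack actions matched
  [{ routeType: 'ActiveDialog', score: 0.1, target: 'BotBuilder:Prompts' }]
]);
console.log(best.target); // prints "*:clarification"
```

In this toy run the global action outscores the active prompt, so the message goes to the triggered dialog instead of causing a reprompt.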

Handling Interruptions

By default, launching a new dialog via its triggerAction clears the callstack and starts fresh. You can do two things to handle the interruption.

First, you can override the default behavior with onSelectAction. Instead of resetting the callstack, you can add the newly triggered dialog on top of it. When the newly triggered dialog finishes, the conversation returns to the point where it was interrupted:

bot.dialog('affirmation', [

Second, you can attach the onInterrupted handler to the dialog that could be interrupted and message the user about what’s happening.
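The two options can be sketched as a pair of trigger action option objects. The intent name question, the /screws/i pattern, and the message text are assumptions for illustration; the handler signatures follow the SDK's trigger action options as I understand them.

```javascript
// Option 1: for the dialog that interrupts. Push it on top of the stack
// instead of letting the default behavior replace the stack.
const stackOnTop = {
  matches: 'question', // illustrative intent name
  onSelectAction: (session, args, next) => {
    // args.action identifies the triggered dialog; beginDialog keeps
    // the existing stack, so the interrupted dialog resumes afterwards.
    session.beginDialog(args.action, args);
  }
};

// Option 2: for the dialog that can be interrupted. Tell the user what is
// happening, then let the default behavior run.
const notifyOnInterrupt = {
  matches: /screws/i, // illustrative pattern
  onInterrupted: (session, dialogId, dialogArgs, next) => {
    session.send('Okay, putting the product lookup aside for now.');
    next(); // this is not a waterfall, so next() must be called explicitly
  }
};
```

Each object would be passed to the relevant dialog, for example bot.dialog('productLookup', [...]).triggerAction(notifyOnInterrupt).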

Open All The Way

And if that is not flexible enough, you can define your own dialog behavior by overriding begin, replyReceived, and even recognize on your dialogs:

bot.dialog('custom', Object.assign(new builder.Dialog(), {
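A minimal sketch of such a dialog could look like this. The echo behavior, the fixed score of 1.0, and the helper name addCustomDialog are assumptions chosen to keep the example short; the helper takes the bot and the botbuilder module as arguments.

```javascript
// Hypothetical helper: `bot` is a builder.UniversalBot,
// `builder` is require('botbuilder').
function addCustomDialog(bot, builder) {
  const custom = Object.assign(new builder.Dialog(), {
    // Called when the dialog is started.
    begin(session, args) {
      session.send('Tell me anything and I will repeat it.');
    },
    // Called for each incoming message while this dialog is active.
    // The score feeds into routing; 1.0 claims every message.
    recognize(context, done) {
      done(null, { score: 1.0 });
    },
    // Called with the recognizer result when this dialog wins the route.
    replyReceived(session, recognizeResult) {
      session.endDialog('You said: ' + session.message.text);
    }
  });
  return bot.dialog('custom', custom);
}
```

Returning a lower score from recognize would give trigger actions on other dialogs a better chance of winning the route.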

I will certainly come back to this technique when I show you how to drive your dialogs from metadata instead of code. It comes in very handy when building product recommendation bots. Stay tuned!