Ecommerce Chatbot

I have published a short screencast about the chatbot that I built and have also shared the code on GitHub.

Enjoy!

When your chatbot performs the tasks of a personal assistant, like scheduling meetings or generating reports, you need to make sure it can understand dates and date ranges.

LUIS has a set of pre-built entities to recognize date and time (builtin.datetime). It will understand when your users say tomorrow, October 1st, or next week, for example, and will convert that to a date or a duration. A couple of examples:

1 | // tomorrow |

Unfortunately, the only quarter-based duration LUIS understands right now is last quarter. It doesn’t recognize this quarter, next quarter, or plurals like last three quarters.

As you can see, the resolutions are indicative, use different formats, and need to be parsed to get converted to dates and date ranges.

When LUIS detects a datetime entity (e.g. tomorrow) it will send back the resolution along with the extracted entity itself (the word tomorrow in this case).
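To make the "different formats" point concrete, here is a minimal sketch that classifies a resolution string by its shape. The three formats it handles (an ISO date like 2016-11-19, an ISO week like 2016-W47, and an ISO 8601 duration like P3W) are assumptions for illustration, and `classifyResolution` is a hypothetical helper, not part of LUIS or the Bot Framework.

```javascript
// Hypothetical helper: classify a resolution string by its shape.
// The formats below are assumptions, not an exhaustive list of what LUIS returns.
function classifyResolution(value) {
  let m;
  // Calendar date, e.g. "2016-11-19"
  if ((m = /^(\d{4})-(\d{2})-(\d{2})$/.exec(value))) {
    return { kind: 'date', year: Number(m[1]), month: Number(m[2]), day: Number(m[3]) };
  }
  // ISO week, e.g. "2016-W47"
  if ((m = /^(\d{4})-W(\d{2})$/.exec(value))) {
    return { kind: 'week', year: Number(m[1]), week: Number(m[2]) };
  }
  // Simple ISO 8601 duration with a single unit, e.g. "P3W"
  if ((m = /^P(\d+)([DWMY])$/.exec(value))) {
    const units = { D: 'days', W: 'weeks', M: 'months', Y: 'years' };
    return { kind: 'duration', amount: Number(m[1]), unit: units[m[2]] };
  }
  // Anything else is passed through untouched for the caller to handle.
  return { kind: 'unknown', raw: value };
}
```

Anything that doesn't match falls through as `unknown`, so a new format shows up as an explicit case to handle rather than a silent misparse.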

First, I try to understand what time span the user asked about:

1 | const span = |
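One way to figure out the span, sketched under the assumption that the extracted entity text (e.g. last three weeks) is available as a plain string. `detectSpan` is a hypothetical name and this is not necessarily how the bot in this post does it.

```javascript
// Hypothetical helper: find the time unit mentioned in an entity's text.
// Returns the singular unit name, or null when no unit is mentioned.
function detectSpan(text) {
  const match = /\b(day|week|month|quarter|year)s?\b/i.exec(text);
  return match ? match[1].toLowerCase() : null;
}
```

For example, `detectSpan('last three weeks')` yields `'week'`, while `detectSpan('tomorrow')` yields `null` because no unit is spelled out.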

Then I parse the dates and durations with moment:

1 | const moment = require('moment'); |

Now we have the date representing the beginning of the period the user asked about. If today were Friday 11/18, for example, and you asked for last three weeks, the date would be Sun, Oct 23 (weeks start on Sunday in the US unless you use isoWeek with moment).
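The Sunday versus ISO week difference is easy to see in a small sketch. To keep it dependency-free, this version uses the built-in `Date` instead of moment. `startOfWeeksAgo` and its `weekStartsOn` parameter are hypothetical names introduced for illustration.

```javascript
// Hypothetical helper: midnight on the first day of the week that started
// `weeks` weeks before the current week.
// weekStartsOn: 0 = Sunday (US convention), 1 = Monday (ISO weeks).
function startOfWeeksAgo(today, weeks, weekStartsOn = 0) {
  // Copy the date at local midnight so the caller's object is not mutated.
  const d = new Date(today.getFullYear(), today.getMonth(), today.getDate());
  // Days elapsed since the start of the current week.
  const offset = (d.getDay() - weekStartsOn + 7) % 7;
  // setDate handles month rollover when the result is zero or negative.
  d.setDate(d.getDate() - offset - 7 * weeks);
  return d;
}

const friday = new Date(2016, 10, 18); // months are 0-based: 10 = November
const sundayBased = startOfWeeksAgo(friday, 3);    // Sun, Oct 23, 2016
const mondayBased = startOfWeeksAgo(friday, 3, 1); // Mon, Oct 24, 2016
```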

One date is not enough though for utterances like:

please generate a service cost report for the last two weeks

Your report generation service/API is likely to require a date range.

LUIS can also understand numbers spelled as digits like 2 or 5 or spelled as words like two or five. A phrase like last two weeks will produce two entities:

1 | "entities": [ |

The last thing I need to do to understand the range is to extract the number and do the date math:

1 | const moment = require('moment'); |
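For the number part, here is a minimal sketch. It assumes each entity is an object with `entity` (the matched text) and `type` fields and that the number entity is typed `builtin.number`. `extractNumber` and the word table are hypothetical and only cover one through ten.

```javascript
// Small lookup for numbers spelled as words (illustrative, not exhaustive).
const NUMBER_WORDS = {
  one: 1, two: 2, three: 3, four: 4, five: 5,
  six: 6, seven: 7, eight: 8, nine: 9, ten: 10
};

// Hypothetical helper: return the first number entity as an integer,
// or null when there is none or it cannot be interpreted.
function extractNumber(entities) {
  const found = entities.find((e) => e.type === 'builtin.number');
  if (!found) return null;
  const text = String(found.entity).trim().toLowerCase();
  if (/^\d+$/.test(text)) return parseInt(text, 10);
  return Object.prototype.hasOwnProperty.call(NUMBER_WORDS, text) ? NUMBER_WORDS[text] : null;
}
```

Returning `null` instead of a default lets the caller decide whether a missing number means "one" (as in last week) or an utterance it should ask the user to rephrase.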

And that’s it. Now last three weeks is understood as 10/23 - 11/12. And last quarter will be 10/1 0:00 - 12/31 23:59.
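As a sanity check on the weeks example, here is a minimal sketch of the range math using the built-in `Date` rather than moment. It assumes weeks start on Sunday, and `lastWeeksRange` is a hypothetical name.

```javascript
// Hypothetical helper: the range covering the last `weeks` full weeks
// before the current week, with weeks starting on Sunday.
function lastWeeksRange(today, weeks) {
  // Midnight on the Sunday that starts the current week.
  const startOfThisWeek = new Date(
    today.getFullYear(), today.getMonth(), today.getDate() - today.getDay()
  );
  // Go back the requested number of whole weeks.
  const start = new Date(startOfThisWeek);
  start.setDate(start.getDate() - 7 * weeks);
  // One millisecond before this week starts, i.e. the end of last Saturday.
  const end = new Date(startOfThisWeek.getTime() - 1);
  return { start, end };
}

const range = lastWeeksRange(new Date(2016, 10, 18), 3);
// range.start is Sun, Oct 23 and range.end falls on Sat, Nov 12
```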

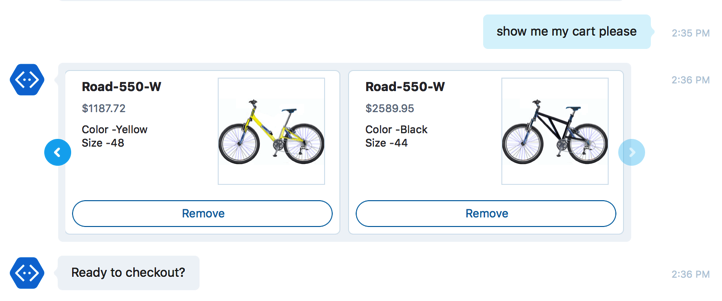

Two weeks ago I attended API Strat in Boston, where I gave a talk on cognitive APIs and conversational interfaces and showed and explained an e-commerce chatbot that I built. My presentation is on SlideShare. I have learned a lot about chatbots and now I feel an urge to write about it.

My bot is using the intent dialog from the Microsoft Bot Framework:

1 | const bot = new builder.UniversalBot(...); |

The intent dialog associates a user’s intent like Explore or Checkout with a specific dialog that knows how to respond.

It feels very much like routing in a web framework where given a specific URL pattern, the request will be routed to a controller that knows how to handle it.
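To illustrate the routing analogy, here is a toy intent router. This is a sketch of the idea only, not the Bot Framework API: `createIntentRouter`, `dispatch`, and the handlers are all hypothetical.

```javascript
// Toy router: maps an intent name to the handler that knows how to respond,
// much like a web framework maps a URL pattern to a controller.
function createIntentRouter() {
  const routes = new Map();
  return {
    // Register a handler for an intent; returns the router for chaining.
    matches(intent, handler) {
      routes.set(intent, handler);
      return this;
    },
    // Run the handler for the intent, or a fallback when nothing is registered.
    dispatch(intent, session) {
      const handler = routes.get(intent);
      return handler ? handler(session) : 'Sorry, I did not understand that.';
    }
  };
}

const router = createIntentRouter()
  .matches('Explore', (session) => `Showing products for ${session.user}`)
  .matches('Checkout', (session) => `Checking out the cart of ${session.user}`);
```

Here `router.dispatch('Explore', { user: 'Ann' })` runs the Explore handler, and an unregistered intent falls through to the default reply.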

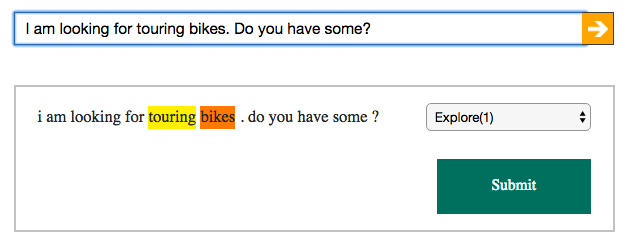

Users don’t spell out their intents like that though. And so the first thing my bot needs to do is to learn to recognize them. The simplest way to trigger a dialog handler in response to a user’s utterance is by matching it with a regex. More sophisticated logic requires an intent recognizer.

An intent recognizer is basically a service that can understand users’ utterances. Given a text message it will return a list of intents that it inferred from it along with supporting entities. Here’s how it looks in LUIS (language understanding service from Microsoft):

The Explore intent was recognized along with two supporting entities that I trained it for. Here’s another way of looking at it:

1 | curl -v "https://api.projectoxford.ai/luis/v2.0/apps/{app-id}" |

And the response:

1 | { |
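Once the JSON comes back, picking the winning intent is straightforward. The sketch below assumes the response carries an `intents` array of `{ intent, score }` objects and an `entities` array. The sample values, the 0.5 threshold, and the `topIntent` helper are made up for illustration.

```javascript
// Hypothetical helper: pick the highest-scoring intent from a response,
// falling back to "None" when nothing clears the threshold.
function topIntent(response, threshold = 0.5) {
  const intents = Array.isArray(response.intents) ? response.intents : [];
  const best = intents.reduce(
    (winner, candidate) => (winner === null || candidate.score > winner.score ? candidate : winner),
    null
  );
  if (!best || best.score < threshold) {
    return { intent: 'None', score: 0, entities: [] };
  }
  return { intent: best.intent, score: best.score, entities: response.entities || [] };
}

// Made-up sample in the assumed shape.
const sampleResponse = {
  intents: [
    { intent: 'Checkout', score: 0.02 },
    { intent: 'Explore', score: 0.97 }
  ],
  entities: [{ entity: 'shoes', type: 'Product' }]
};
const recognized = topIntent(sampleResponse);
```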

Microsoft Bot Framework comes with built-in support for LUIS in the form of LuisRecognizer.

Not everything your users say has to be sent to a natural language service to extract the intent. Buttons and tappable images can post back bot-specific commands like /show:123456789, for example, that you can easily recognize with a regex. Also, if you want your bot to smile back at a smile sent to it, you don’t need to train a linguistic model either.

It turns out, building your own recognizer is not hard at all. I have built a few for my e-commerce bot and here’s how it works.

First, know that the Bot Framework supports sending a message through a number of recognizers at the same time. You can chain them or run them all in parallel:

1 | const intents = new builder.IntentDialog({ |
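Conceptually, running recognizers in parallel means asking all of them and keeping the most confident answer. Here is a minimal sketch of that idea, not the framework's actual implementation. It assumes each recognizer exposes `recognize(context, callback)` and reports `{ score, intent }`. `recognizeAll` and the two stub recognizers are hypothetical.

```javascript
// Hypothetical helper: ask every recognizer and keep the highest-scoring result.
function recognizeAll(recognizers, context, done) {
  let pending = recognizers.length;
  let best = { score: 0.0, intent: null };
  let failed = false;
  if (pending === 0) return done(null, best);
  recognizers.forEach((recognizer) => {
    recognizer.recognize(context, (err, result) => {
      if (failed) return; // an earlier recognizer already reported an error
      if (err) {
        failed = true;
        return done(err);
      }
      if (result && result.score > best.score) best = result;
      pending -= 1;
      if (pending === 0) done(null, best);
    });
  });
}

// Two stub recognizers with fixed answers, for demonstration only.
const weak = { recognize: (ctx, cb) => cb(null, { score: 0.4, intent: 'Explore' }) };
const strong = { recognize: (ctx, cb) => cb(null, { score: 0.9, intent: 'Smile' }) };

let winner = null;
recognizeAll([weak, strong], { message: { text: ':)' } }, (err, result) => {
  winner = result;
});
```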

The recognizer itself is a very simple interface with only one method - recognize. Here’s how you would detect a smile, for example:

1 | module.exports = { |
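For readers who want something they can run without the framework, here is a minimal sketch of a smile recognizer. It assumes the message text arrives as `context.message.text` and that the callback takes `(err, result)` with a `score` and an `intent`. The `Smile` intent name and the set of smileys are made up.

```javascript
// Sketch of a recognizer that reports a "Smile" intent for a few smileys.
const smileRecognizer = {
  recognize(context, callback) {
    const text = (context.message && context.message.text) || '';
    // Matches ":)" and ":-)" plus two smiling emoji (illustrative, not exhaustive).
    const isSmile = /:-?\)|🙂|😊/u.test(text);
    callback(null, isSmile
      ? { score: 1.0, intent: 'Smile' }
      : { score: 0.0, intent: null });
  }
};

// Usage with a fake context object.
let smileResult = null;
smileRecognizer.recognize({ message: { text: 'thanks :)' } }, (err, result) => {
  smileResult = result;
});
```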

And here’s another one that understands commands:

1 |

|
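A minimal sketch of what a command recognizer could look like for postbacks such as /show:123456789. It assumes the same `recognize(context, callback)` shape with the text in `context.message.text`. The `/name:argument` pattern, the intent naming, and the `Id` entity type are hypothetical choices for illustration.

```javascript
// Sketch of a recognizer for bot-specific commands like "/show:123456789".
const commandRecognizer = {
  recognize(context, callback) {
    const text = ((context.message && context.message.text) || '').trim();
    // A slash, a command name, a colon, then a single argument without spaces.
    const match = /^\/(\w+):(\S+)$/.exec(text);
    if (!match) {
      return callback(null, { score: 0.0, intent: null });
    }
    callback(null, {
      score: 1.0,
      intent: '/' + match[1],
      entities: [{ type: 'Id', entity: match[2] }]
    });
  }
};

// Usage with a fake context object.
let commandResult = null;
commandRecognizer.recognize({ message: { text: '/show:123456789' } }, (err, result) => {
  commandResult = result;
});
```

Because a command either matches the pattern exactly or not at all, the score is simply 1.0 or 0.0, which lets it win over a fuzzier recognizer when both run on the same message.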

That’s it for now but there is more to come. Stay tuned!